TL;DR:

- AI significantly improves legal research speed by understanding natural language queries and summarizing documents.

- Human expertise remains essential to verify AI outputs due to accuracy limitations and hallucination risks.

- A hybrid workflow using AI for breadth and humans for depth offers the most effective and responsible approach.

Sifting through hundreds of cases, statutes, and regulatory filings is one of the most time-consuming bottlenecks in legal work. For small business owners and legal professionals alike, the pressure to find accurate answers fast is relentless. AI adoption in legal research is accelerating rapidly, with tools now capable of delivering 38-115% productivity gains depending on the workflow. This article walks through five practical, proven ways AI is reshaping legal research, what the benchmark data actually says, where these tools still fall short, and how to build a workflow that captures the upside without the risk.

Table of Contents

- How AI delivers smarter legal search and answers

- Automated case summarization and document review

- Benchmark results: How accurate and productive is legal AI?

- Where AI struggles: Limits, edge cases, and best uses

- Choosing the best AI tools and workflow for your legal research

- Our take: Why the hybrid human-AI model is the most powerful solution

- Get started with smarter, AI-powered legal research tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI speeds up legal search | AI tools streamline legal research by providing faster, more relevant case and statute identification. |
| Summarization and review gains | Automated case and document summaries help legal professionals and SMBs save significant review time. |
| Accuracy gaps exist | Even top AI platforms show lower accuracy and hallucination risks compared to human experts. |
| Human oversight is vital | The safest and most effective outcome comes from combining AI's speed with expert legal verification. |
| Choose tools wisely | Select legal AI tools based on your workflow, task complexity, and always pilot before relying on outputs. |

How AI delivers smarter legal search and answers

Traditional legal search relies on keyword matching. You type in a term, get a flood of loosely related results, and spend hours separating the relevant from the irrelevant. Modern AI changes that entirely.

Today's AI-powered legal search tools use natural language processing, meaning you can ask a question the way you would ask a colleague. Instead of typing "breach contract damages," you ask, "What remedies are available when a vendor breaches a fixed-price service contract?" The system understands context, intent, and legal relationships.

The underlying technology matters here. Natural language querying, automated summarization, and precedent identification are now standard features in leading platforms, powered by techniques like Retrieval-Augmented Generation (RAG) and large language models (LLMs) tuned for legal domains. RAG works by grounding AI responses in verified legal databases rather than generating answers from memory alone. The STARA system, developed for statutory reasoning, demonstrates high precision in surveying statutes across complex regulatory landscapes.
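
To make the retrieve-then-ground idea concrete, here is a minimal sketch in Python. It is not how any named platform is implemented: the `Passage` type, the `retrieve` and `build_grounded_prompt` helpers, the keyword-overlap scoring, and the three passages are all assumptions made for illustration, and the passage text is invented filler rather than legal authority. A production system would presumably draw on a vetted legal database and a stronger retriever before handing the prompt to an LLM.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical stopword list; a real retriever would more likely use BM25 or embeddings.
STOPWORDS = {"a", "an", "the", "of", "for", "when", "what", "are", "is", "or", "can", "may", "that"}


@dataclass(frozen=True)
class Passage:
    source_id: str  # placeholder label, not a real citation
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase each word, strip surrounding punctuation, and drop stopwords."""
    words = {w.strip(".,?!;:\"'()").lower() for w in text.split()}
    return words - STOPWORDS - {""}


def retrieve(query: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Return up to k passages that share at least one keyword with the query."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(p.text)), p) for p in corpus]
    matches = [(score, p) for score, p in scored if score > 0]
    matches.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep corpus order
    return [p for _, p in matches[:k]]


def build_grounded_prompt(query: str, passages: list[Passage]) -> str:
    """Build a prompt that restricts the model to the retrieved, labeled sources."""
    sources = "\n".join(f"[{p.source_id}] {p.text}" for p in passages)
    return (
        "Answer using only the sources below and cite the source label for each claim. "
        "If the sources do not answer the question, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


# Invented filler text for illustration only; not legal authority.
CORPUS = [
    Passage("EXAMPLE-1", "A vendor that breaches a fixed-price service contract may owe expectation damages."),
    Passage("EXAMPLE-2", "Employers must keep payroll records for a minimum retention period."),
    Passage("EXAMPLE-3", "Remedies for breach of contract can include damages or specific performance."),
]
QUERY = "What remedies are available when a vendor breaches a fixed-price service contract?"

hits = retrieve(QUERY, CORPUS)
prompt = build_grounded_prompt(QUERY, hits)
print(prompt)
```

The point of the design is that the model only sees labeled passages pulled from the database, so every claim in its answer can be traced back to a source that a human can open and check.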

For legal professionals exploring legal research essentials, this shift means more targeted results and less time sifting. Tools like Lexis+ AI apply these approaches to deliver relevant precedents and statutory sections in seconds rather than hours.

Key capabilities you should look for in any AI legal search platform:

- Natural language query support

- Precedent identification and ranking

- Rapid statutory surveys across jurisdictions

- Source citation with verified legal databases

- Integration with existing AI legal tools

Pro Tip: Always phrase your queries as complete questions in plain English. Vague or overly technical prompts produce generic results. Specificity drives precision.

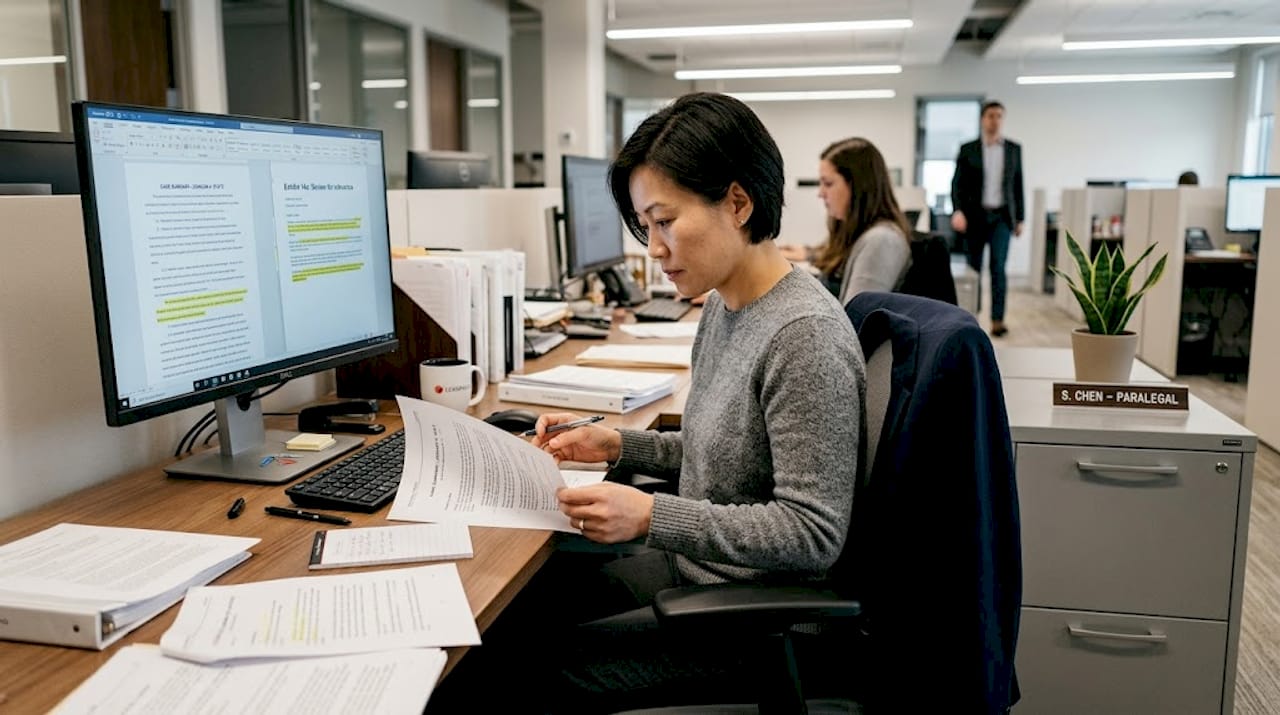

Automated case summarization and document review

Once AI helps you find the right cases, the next challenge is reading them. A single appellate opinion can run 40 to 80 pages. Multiply that across a contract dispute or compliance audit, and the reading load becomes unmanageable.

AI summarization tools solve this by condensing lengthy opinions, contracts, and regulatory filings into structured summaries in seconds. You get the holding, key facts, and relevant legal standards without reading every paragraph. For small firms and SMBs managing multiple matters with lean teams, this is a genuine force multiplier.

Vincent AI, a RAG-based platform, yields 38-115% productivity gains in legal review workflows, depending on task complexity. Separately, LLMs with retrieval and summarization tools have been shown to boost both user accuracy and speed in document review tasks.
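
It helps to translate a productivity gain into hours. The sketch below assumes that "productivity gain" means more output per hour, so the same task takes 1 / (1 + gain) of its original time. That reading is an assumption, not something the figures above specify, and `hours_after_gain`, `fraction_of_time_saved`, and the 10-hour review are hypothetical.

```python
def hours_after_gain(baseline_hours: float, gain: float) -> float:
    """Hours needed for the same work after a fractional productivity gain.

    Assumes gain is extra output per hour (0.38 means 38% more),
    so time scales by 1 / (1 + gain).
    """
    if gain <= -1:
        raise ValueError("gain must be greater than -1")
    return baseline_hours / (1 + gain)


def fraction_of_time_saved(gain: float) -> float:
    """Share of the original time that is freed up, equal to gain / (1 + gain)."""
    return 1 - hours_after_gain(1.0, gain)


for gain in (0.38, 1.15):  # low and high ends of the 38-115% range
    hours = hours_after_gain(10.0, gain)  # a hypothetical 10-hour review
    print(f"{gain:.0%} gain: {hours:.2f} h, {fraction_of_time_saved(gain):.1%} of time saved")
```

Under this reading, a 115% gain cuts the time by slightly more than half, while a 38% gain frees a little over a quarter of it.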

Beyond summarization, AI-powered redlining and issue-spotting tools flag unusual clauses, missing provisions, and compliance gaps in contracts automatically. This is especially valuable during due diligence, where reviewing dozens of agreements under time pressure is the norm. You can learn more about how this works in practice with AI document drafting workflows.

Practical use cases for AI document review:

- Summarizing case law for client memos

- Flagging non-standard clauses in vendor contracts

- Identifying compliance risks in employment agreements

- Accelerating due diligence with document comparison tools

- Supporting multi-jurisdiction workflows with faster document triage

Pro Tip: For sensitive matters, always double-check AI summaries against the original document. Edge cases and jurisdiction-specific nuances sometimes get flattened in automated summaries.

Benchmark results: How accurate and productive is legal AI?

Before committing to any AI legal research tool, you need to understand what the data actually shows. The results are impressive in some areas and sobering in others.

On complex legal research benchmarks, Lexis+ AI achieves 65% accuracy, while Westlaw Precision reaches 42%. Human experts, by comparison, score 92 to 97% accuracy but work significantly more slowly. Lexis+ AI outperforms both Westlaw Precision and CoCounsel in head-to-head benchmarks.

Meanwhile, hallucination rates for legal AI tools range from 17 to 33%. Hallucination means the AI generates a plausible-sounding but factually incorrect answer, such as citing a case that does not exist or misstating a statute's scope. That is a significant risk in any legal context.
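
A per-answer hallucination rate compounds quickly across a research session. As a rough worked example, assume each answer is wrong independently with the same probability p (a simplification, since real errors tend to cluster around hard questions). The chance that a batch of n answers contains at least one hallucination is then 1 - (1 - p)^n. The function name and the batch size of ten are illustrative.

```python
def prob_at_least_one_hallucination(rate: float, answers: int) -> float:
    """Probability that at least one of `answers` independent outputs is hallucinated."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    if answers < 0:
        raise ValueError("answers must be non-negative")
    return 1.0 - (1.0 - rate) ** answers


for rate in (0.17, 0.33):  # low and high ends of the 17-33% range
    batch = 10  # hypothetical number of AI answers in one research session
    risk = prob_at_least_one_hallucination(rate, batch)
    print(f"rate {rate:.0%}: expect about {rate * batch:.1f} bad answers, "
          f"{risk:.1%} chance of at least one")
```

At either end of the range, a ten-answer session is more likely than not to contain at least one hallucinated answer, which is why verification has to be routine rather than occasional.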

Quick comparison: AI tools vs. human researchers

| Tool / Reviewer | Accuracy | Hallucination Risk | Speed |
|---|---|---|---|
| Lexis+ AI | 65% | Moderate | Very fast |
| Westlaw Precision | 42% | Higher | Fast |
| CoCounsel | Below Lexis+ AI | Higher | Fast |
| Human expert | 92-97% | Very low | Slow |

The productivity gains are real. Efficiency improvements of 38-115% are achievable, particularly for high-volume, lower-complexity tasks. But the accuracy gap means AI output should never go directly into a filing or client advice without review. Explore LexisNexis alternatives and AI tool benchmarks to compare your options before committing.

Key takeaways from the benchmark data:

- AI is fastest for initial research and document triage

- Human review remains essential for complex or high-stakes matters

- Document comparison tools add value when layered with human analysis

- Hallucination risk is highest on nuanced or multi-jurisdictional questions

Where AI struggles: Limits, edge cases, and best uses

The benchmark numbers tell part of the story. The more important question is: where does AI specifically break down, and what does that mean for your workflow?

AI struggles most with multi-jurisdictional complexity, analogical reasoning, conflicting provisions, and burden of proof analysis. Researchers describe this as the "jagged frontier" of legal AI, where performance is strong on some tasks and surprisingly weak on adjacent ones. A tool that accurately summarizes a contract clause may completely miss the implication of that clause under a specific state's case law.

"AI excels at summarization but often misses subtle legal distinctions."

Users must verify AI output, and expert input is what reduces risk in high-stakes matters. This is not a limitation that will disappear with the next model update. It reflects a fundamental difference between pattern recognition and legal reasoning.

The smartest workflow uses AI for breadth and humans for depth. AI finds and organizes; humans analyze and apply. Review our AI guidance workflow for a practical framework, and consult expert legal consultation resources when matters require professional judgment.

Edge cases and recommended reviewer:

| Task | AI Reliability | Recommended Reviewer |
|---|---|---|
| Basic statutory survey | High | AI with spot-check |
| Contract summarization | High | AI with human review |
| Multi-jurisdiction analysis | Low | Human expert required |
| Analogical legal reasoning | Low | Human expert required |
| Burden of proof arguments | Low | Human expert required |
| Due diligence document triage | Moderate | AI plus human sign-off |
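
The table above can be encoded as a small lookup so that routing decisions are applied consistently rather than from memory. This is a minimal sketch: the `REVIEW_POLICY` mapping and `recommended_reviewer` function are hypothetical names, and sending unknown tasks to a human expert is an added assumption, chosen as the most conservative fallback.

```python
from enum import Enum


class Reliability(Enum):
    HIGH = "High"
    MODERATE = "Moderate"
    LOW = "Low"


# Mirrors the rows of the table; keys are lowercase task names.
REVIEW_POLICY = {
    "basic statutory survey": (Reliability.HIGH, "AI with spot-check"),
    "contract summarization": (Reliability.HIGH, "AI with human review"),
    "multi-jurisdiction analysis": (Reliability.LOW, "Human expert required"),
    "analogical legal reasoning": (Reliability.LOW, "Human expert required"),
    "burden of proof arguments": (Reliability.LOW, "Human expert required"),
    "due diligence document triage": (Reliability.MODERATE, "AI plus human sign-off"),
}


def recommended_reviewer(task: str) -> str:
    """Look up the reviewer for a task, ignoring case and surrounding whitespace."""
    entry = REVIEW_POLICY.get(task.strip().lower())
    if entry is None:
        # Assumption: anything not listed goes to the strictest tier.
        return "Human expert required"
    return entry[1]


print(recommended_reviewer("Contract summarization"))
print(recommended_reviewer("Novel regulatory question"))
```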

Key limits to keep in mind:

- AI overconfidence is common: outputs sound authoritative even when wrong

- Conflicting statutes across jurisdictions trip up most current tools

- Ethical and strategic judgment cannot be automated

Choosing the best AI tools and workflow for your legal research

Knowing what AI can and cannot do is only useful if you translate it into a practical adoption strategy. Here is a straightforward approach for legal professionals and small businesses.

Start with affordable tools and integrate RAG-enhanced platforms where AI handles breadth and humans handle depth. This hybrid model is the most cost-effective entry point for SMBs.

- Define your research problems first. Know whether you need case summarization, statutory surveys, contract review, or compliance checks before evaluating any tool.

- Compare platform features and benchmark data. Use the accuracy and hallucination figures above as your baseline. Do not rely on vendor marketing alone.

- Pilot tools on non-critical research. Run AI on internal memos or low-stakes matters before using it on client-facing work.

- Integrate human review at every output stage. Build a checklist for verifying AI-generated summaries, citations, and clause flags.

- Set escalation policies. Define which matter types always require a licensed attorney to review AI output before it moves forward.
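
The review and escalation steps above can be sketched as a simple release gate. Everything here is illustrative: the `AIOutput` fields, the three checklist items, and the example escalated matter types are assumptions to be replaced with your own written policy.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical matter types that always need a licensed attorney's review.
ESCALATED_MATTER_TYPES = {"litigation filing", "client advice"}


@dataclass
class AIOutput:
    matter_type: str
    citations_verified: bool = False
    summary_checked_against_source: bool = False
    clause_flags_reviewed: bool = False
    attorney_signed_off: bool = False


def blocking_issues(output: AIOutput) -> list[str]:
    """List every checklist item that still blocks release of this output."""
    issues = []
    if not output.citations_verified:
        issues.append("verify every citation against the source")
    if not output.summary_checked_against_source:
        issues.append("check the summary against the original document")
    if not output.clause_flags_reviewed:
        issues.append("review the AI-generated clause flags")
    escalated = output.matter_type.strip().lower() in ESCALATED_MATTER_TYPES
    if escalated and not output.attorney_signed_off:
        issues.append("obtain licensed attorney sign-off")
    return issues


def ready_for_release(output: AIOutput) -> bool:
    """An output moves forward only when nothing is blocking it."""
    return not blocking_issues(output)


draft = AIOutput(
    matter_type="client advice",
    citations_verified=True,
    summary_checked_against_source=True,
    clause_flags_reviewed=True,
)
print(ready_for_release(draft), blocking_issues(draft))
```

Returning the list of open items, rather than a bare yes or no, gives the reviewer a concrete to-do list and leaves a record of why an output was held back.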

For a complete framework, the guide on building an AI workflow covers each of these steps in detail. You can also explore BXP Legal AI solutions for tools designed with these principles built in.

Pro Tip: Set clear, written verification and escalation policies for all AI-generated outputs before you go live. Informal "we'll check it" habits erode quickly under deadline pressure.

Our take: Why the hybrid human-AI model is the most powerful solution

The legal tech industry is prone to overclaiming. Every new model release gets positioned as a near-replacement for human legal expertise. We think that framing is both wrong and counterproductive.

Hybrid human-AI workflows save 40-55% of research time, but outputs must be verified. That verification step is not a bug in the system. It is the system. The professionals who treat AI as a drafting and research assistant, not an oracle, consistently outperform those who go all-in on automation.

There is also a skills erosion risk that rarely gets discussed. When junior associates stop reading full opinions because AI summarizes them, they lose the pattern recognition that comes from deep reading. That is a long-term cost that does not show up in short-term productivity metrics.

The advanced research methods that distinguish great legal professionals still require human judgment, contextual experience, and professional ethics. AI accelerates the low-level work. The competitive edge comes from pairing that speed with expert analysis, not from replacing one with the other. For small firms and business users, this hybrid model is the most practical and defensible path forward.

Get started with smarter, AI-powered legal research tools

If you are ready to put these insights into action, BXP Legal AI offers a straightforward starting point. The platform is designed for legal professionals and small business owners who need reliable AI-assisted research, document analysis, and compliance guidance without a steep learning curve.

Onboarding is simple, and the document comparison feature makes it easy to spot contract differences and flag compliance issues fast. Every output is framed as informational, with clear guidance to consult qualified legal professionals for specific matters. That transparency is built into the platform by design. Explore the tools, run a pilot on a real research task, and see how much time you recover in the first week.

Frequently asked questions

What types of legal research tasks does AI perform best?

AI excels at summarization and precedent identification but falls short on nuanced legal interpretation and analogical reasoning. Use it for high-volume, lower-complexity research tasks first.

How accurate are legal AI research tools compared to humans?

Lexis+ AI scores 65% accuracy while human experts reach 92-97% on complex legal benchmarks. AI is faster but requires human verification on anything high-stakes.

What are the risks of using AI for legal research?

Hallucination rates of 17-33% mean AI tools can generate confident but incorrect legal information. Every AI output should be reviewed by a qualified professional before use.

Which AI tools are recommended for small businesses starting legal research automation?

Affordable tools like CoCounsel and Paxton AI offer user-friendly, RAG-enhanced research and document review features suited to smaller teams and budgets.

Does AI legal research replace the need for legal professionals?

No. Hybrid human-AI workflows are most effective because AI handles speed and volume while expert oversight ensures accuracy, compliance, and sound legal judgment.