TL;DR:

- Legal research automation using retrieval-augmented generation provides verifiable, source-backed legal answers.

- Human oversight remains essential due to AI's error rates and potential for outdated or inaccurate information.

- Small and mid-sized legal teams can improve efficiency by piloting targeted AI tools, validating results, and building workflows around verified data.

Legal teams at small and mid-sized firms are under real pressure: more regulations, tighter budgets, and a flood of AI tools promising to fix everything overnight. The reality is that AI-powered legal research is more nuanced than a simple chatbot answering your questions. Knowing what these systems actually do, where they fail, and how to deploy them without exposing your organization to compliance risk is what separates teams that gain a genuine edge from those that create new problems while trying to solve old ones. This article gives you the honest, evidence-based picture.

Table of Contents

- What is legal research automation?

- Key tools and features in the market

- Limitations, compliance risks, and persistent challenges

- How small legal teams and SMEs can leverage automation effectively

- What most legal teams get wrong about automation

- Explore AI-powered legal guidance for your team

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Automation relies on source-grounded AI | Modern legal research tools use techniques like retrieval-augmented generation (RAG) to ground answers in trusted databases, enhancing reliability. |
| Human oversight still required | Despite automation, teams must verify findings and ensure data is current before relying on the results for compliance. |
| Best gains are in repetitive tasks | Legal research automation returns the greatest ROI on routine retrieval, summarization, and multi-source analysis work. |
| Edge cases and compliance risks remain | Even advanced systems can hallucinate and struggle with uncommon legal scenarios, so careful implementation is crucial. |

What is legal research automation?

Legal research automation is not just a smarter search bar. It refers to AI-driven systems that retrieve, synthesize, and summarize legal sources, including statutes, case law, and regulatory guidance, with far greater speed than any manual process. But the key word is system. These tools work through structured workflows, not open-ended conversation.

The technology most relevant to legal professionals right now is Retrieval-Augmented Generation, commonly called RAG. A RAG system does something that a standard large language model (LLM) like a general-purpose chatbot cannot: it pulls from a curated, authoritative database before generating an answer. That means when you ask a RAG-based legal tool about a specific regulation, it retrieves actual documents from sources like Westlaw or LexisNexis first, then synthesizes a response grounded in those documents.

Why does this matter? Because plain LLMs generate text based on patterns in their training data. They can confidently produce an answer that sounds authoritative but cites a case that does not exist. RAG is preferred over plain or fine-tuned LLMs for legal research precisely because its outputs can be checked against the real legal documents it retrieved.
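To make the retrieve-then-generate order concrete, here is a minimal sketch in Python. It is not how Westlaw, Lexis+ AI, or any vendor implements RAG: retrieval is crude keyword overlap over a tiny in-memory corpus, the document labels are hypothetical, and the "generation" step is a stub that only quotes retrieved text with its citation (a real system would hand these passages to an LLM as context).

```python
import re
from dataclasses import dataclass

# Common words ignored when matching; a real system would use a proper search index.
STOPWORDS = {"a", "an", "the", "be", "must", "how", "to", "for", "of", "is", "what"}


@dataclass
class LegalDoc:
    doc_id: str  # hypothetical citation label
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase alphanumeric terms, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(query: str, corpus: list[LegalDoc], k: int = 2) -> list[LegalDoc]:
    """Step 1 (retrieval): rank documents by shared query terms and keep the top k matches."""
    terms = tokenize(query)
    scored = [(len(terms & tokenize(doc.text)), doc) for doc in corpus]
    matches = [pair for pair in scored if pair[0] > 0]
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in matches[:k]]


def answer(query: str, corpus: list[LegalDoc]) -> str:
    """Step 2 (generation), stubbed: quote each retrieved passage with its citation.
    A real RAG system would pass these passages to an LLM as context."""
    hits = retrieve(query, corpus)
    if not hits:
        return "No supporting source found; escalate to manual research."
    return " ".join(f"{doc.text} [{doc.doc_id}]" for doc in hits)
```

The design point is that every sentence in the answer traces back to a retrieved document with a label you can look up. Real systems add an LLM on top of this step, and that is where errors can still creep in: the model may misstate or overextend what the retrieved source actually says.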

| Feature | RAG-based system | Plain LLM |
|---|---|---|
| Source grounding | Yes, retrieves from legal databases | No, relies on training data |
| Citation verifiability | High | Low to none |
| Hallucination risk | Lower (but not zero) | Higher |
| Data freshness | Depends on database updates | Fixed at training cutoff |
| Best use case | Legal research, compliance review | General drafting, brainstorming |

Automation does not mean full autonomy. You still need structured prompts, clear scope, and human review of every output. Think of it as a highly capable research associate who works fast but needs supervision. Having AI legal tools explained in depth helps you set realistic expectations before you commit to any platform.

Pro Tip: When evaluating any legal AI tool, ask the vendor directly: "What database does your system retrieve from, and how often is it updated?" If they cannot answer clearly, that is a red flag.

Key tools and features in the market

The two platforms that dominate enterprise-level legal research automation right now are CoCounsel (integrated with Westlaw Precision) and Lexis+ AI. Both support natural language queries and case summarization grounded in authoritative legal sources, which means you can ask a question in plain English and receive a structured, cited answer rather than a list of raw search results.

Here is how the leading tools compare on features most relevant to compliance teams:

| Tool | Natural language search | Case summarization | Source verification | Regulatory compliance focus |
|---|---|---|---|---|
| CoCounsel (Westlaw) | Yes | Yes | Westlaw Precision | Strong |
| Lexis+ AI | Yes | Yes | LexisNexis database | Strong |
| Harvey AI | Yes | Limited | Varies | Moderate |
| BXP Legal AI | Yes | Yes | Authoritative citations | SME-focused |

Beyond search and summarization, the features that matter most for compliance officers include:

- Instant source verification: Every answer should link back to a primary source you can check yourself.

- Regulatory update tracking: The tool should flag when a statute or regulation has changed recently.

- Multi-jurisdictional support: Critical for SMEs operating across state lines or internationally.

- Document analysis: The ability to upload a contract or policy and receive a compliance gap analysis.

- Audit trails: Logs of what was searched and what was returned, essential for demonstrating due diligence.
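As an illustration of what an audit trail entry might capture, the sketch below records who searched, what was asked, which sources came back, and when, serialized as JSON Lines (one JSON object per line). The field names and the in-memory `AuditLog` class are hypothetical; in practice your vendor's logging or your document management system would hold this record.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    """One research event: who asked what, and which sources came back."""
    user: str
    query: str
    sources_returned: list[str]
    # UTC timestamp captured when the entry is created
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


class AuditLog:
    """In-memory log with no update or delete methods, exported as JSON Lines."""

    def __init__(self) -> None:
        self._lines: list[str] = []

    def record(self, entry: AuditEntry) -> None:
        # Serialize immediately so later changes to the entry object cannot alter the log
        self._lines.append(json.dumps(asdict(entry), sort_keys=True))

    def export(self) -> str:
        return "\n".join(self._lines)

    def entries(self) -> list[dict]:
        return [json.loads(line) for line in self._lines]
```

Serializing each entry at the moment it is recorded is the useful habit here: the log reflects what the tool returned at that time, which is what you need when demonstrating due diligence later.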

When you explore how AI supports legal research, you quickly realize that the tools doing the most useful work are the ones tightly integrated with verified databases, not the ones with the flashiest interfaces. Source freshness is not a minor technical detail. Stale data in a compliance context can mean missing a regulatory amendment that went into effect last quarter.

For teams new to this space, starting with essential legal research techniques gives you a framework for evaluating whether any tool is actually improving your process or just adding complexity.

Limitations, compliance risks, and persistent challenges

Here is where honest evaluation gets uncomfortable. RAG systems are better than plain LLMs, but they are not reliable enough to use without oversight. In benchmark testing, RAG-based legal research tools still hallucinated on 17 to 34% of queries, and that error rate is especially dangerous in unsettled areas of law or novel situations where the database has limited relevant precedent.

The risk zones for compliance teams are specific and predictable:

- Outdated databases: If the vendor's data lags by even a few months, you may be researching against superseded regulations.

- Ambiguous multi-issue cases: When a legal question crosses multiple domains (employment law plus privacy law, for example), retrieval systems can miss the intersection.

- Edge cases: Fact patterns that do not fit standard legal categories often return generic answers that look authoritative but are not applicable.

- Overconfidence in output: The biggest practical risk is not the AI making an error. It is the human accepting the output without verification.

"Hybrid human-AI approaches best manage compliance and accuracy, especially in regulated environments." This is not a caveat. It is the operating model that expert analysis consistently recommends for any team where errors carry legal or regulatory consequences.

Real-world failures tend to follow a pattern: a team under time pressure accepts an AI-generated summary without checking the underlying source, the summary contains a subtle error about a regulatory threshold, and the error propagates into a compliance memo or contract. The fix is not to avoid automation. It is to build verification into the workflow as a non-negotiable step.

When you consult legal experts alongside AI tools, you create a check that catches what the system misses. Similarly, understanding AI document drafting challenges helps you design workflows that account for where automation is weakest.

Pro Tip: Build a simple verification checklist for every AI-generated research output: confirm the cited source exists, check the publication date, and verify the holding applies to your jurisdiction. This takes five minutes and catches the most common failure modes.
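For teams that track research outputs in a spreadsheet or internal tool, the three checklist items can be expressed as a small function. This is a sketch under stated assumptions: `known_sources` stands in for a lookup against a database you trust, the jurisdiction codes are illustrative, and the 365-day age threshold is an arbitrary placeholder, not a legal standard. A flagged date means "confirm the source has not been amended or superseded", not "the source is wrong".

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Citation:
    source_id: str     # citation exactly as it appears in the AI output
    published: date    # publication or last-amended date of the cited source
    jurisdiction: str  # illustrative codes such as "CA" or "NY"


def verify(
    citation: Citation,
    known_sources: set[str],
    jurisdiction: str,
    max_age_days: int = 365,
    today: Optional[date] = None,
) -> list[str]:
    """Run the three checklist items; an empty result means every check passed."""
    today = today or date.today()
    problems = []
    # 1. Does the cited source exist in a database you trust?
    if citation.source_id not in known_sources:
        problems.append("cited source not found in the verified database")
    # 2. Is the source recent enough? (365 days is an arbitrary placeholder threshold.)
    if (today - citation.published).days > max_age_days:
        problems.append("source is older than the threshold; confirm it has not been amended or superseded")
    # 3. Does it come from the jurisdiction you are researching?
    if citation.jurisdiction != jurisdiction:
        problems.append(f"jurisdiction mismatch: {citation.jurisdiction} vs {jurisdiction}")
    return problems
```

Returning a list of problems rather than a single pass/fail keeps the reviewer's attention on what specifically needs checking by hand.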

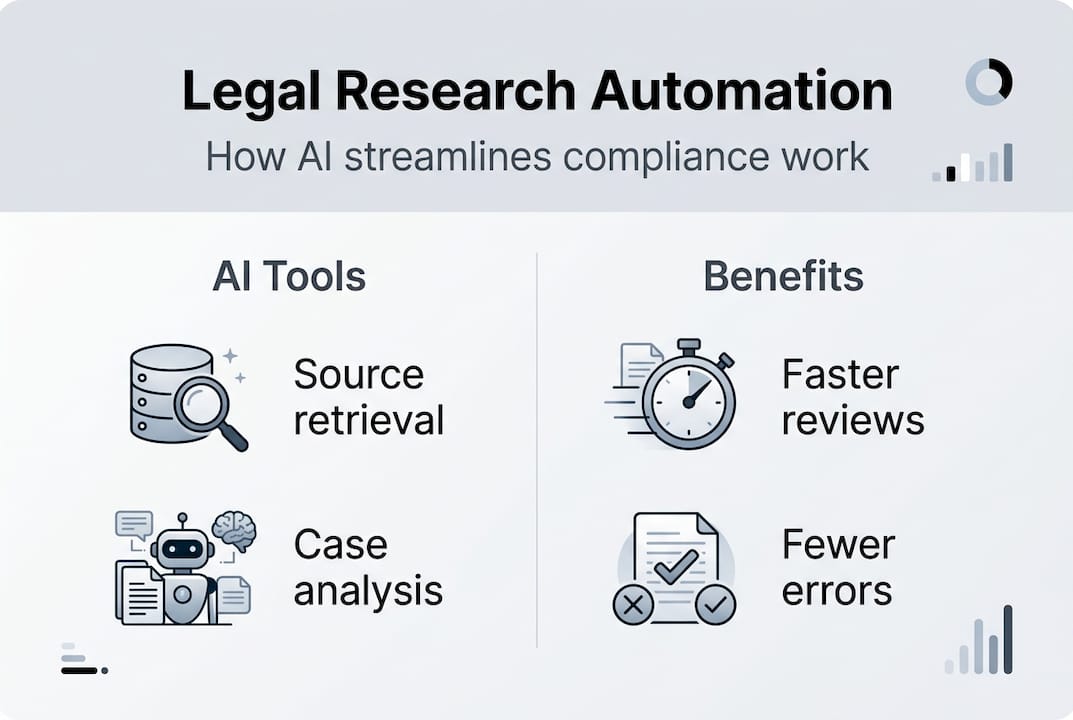

How small legal teams and SMEs can leverage automation effectively

Smaller organizations often assume that enterprise-grade legal AI is out of reach, or that the ROI does not justify the learning curve. The evidence says otherwise. Firms report 38 to 140% productivity gains with automation, but those gains depend entirely on matching the tool to the workflow and actually measuring the impact on accuracy and compliance outcomes.

Here is what works for lean legal teams:

Do:

- Choose tools with grounded, verifiable outputs (RAG-based, not plain LLM).

- Run a 30-day pilot using real tasks from your current workload, not demo scenarios.

- Track two metrics: time saved per research task and error rate compared to manual research.

- Establish a human review step for every output before it enters a document or memo.

- Use automation for repetitive retrieval, bulk regulation scanning, and first-draft summarization.

Do not:

- Deploy a tool firm-wide before validating it on your specific practice areas.

- Accept vendor claims about accuracy without requesting benchmark data.

- Use automation for final legal opinions or advice without attorney review.

- Ignore data recency. Ask vendors how frequently their databases are updated.
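The two pilot metrics above can be tallied with a few lines of code. This minimal sketch assumes each pilot task also has a manual baseline (either done in parallel or taken from comparable past work) and that a reviewer marks whether each version contained an error; the `PilotTask` fields are hypothetical and "error rate" here means the share of tasks with at least one error.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PilotTask:
    manual_minutes: float   # baseline time for the task done manually
    ai_minutes: float       # time with the tool, including human review
    ai_had_error: bool      # reviewer found an error in the AI-assisted output
    manual_had_error: bool  # reviewer found an error in the manual baseline


def pilot_summary(tasks: list[PilotTask]) -> dict[str, float]:
    """Average minutes saved per task, plus the share of tasks with an error under each method."""
    if not tasks:
        raise ValueError("a pilot needs at least one task")
    n = len(tasks)
    return {
        "avg_minutes_saved": mean(t.manual_minutes - t.ai_minutes for t in tasks),
        "ai_error_rate": sum(t.ai_had_error for t in tasks) / n,
        "manual_error_rate": sum(t.manual_had_error for t in tasks) / n,
    }
```

Counting review time inside `ai_minutes` matters: a tool that saves research time but demands long verification may show little net gain, and an AI error rate above the manual baseline is a signal to narrow the tool's scope before any wider rollout.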

Building document automation workflows around these principles frees your senior legal staff to focus on complex analysis, client strategy, and judgment calls that AI genuinely cannot make. That is the real productivity gain: not replacing legal expertise, but removing the repetitive work that crowds it out.

For teams looking to scale, practical AI guidance for legal teams should cover how to build a governance framework around your AI tools so that compliance stays intact as usage grows. Pairing that framework with AI-driven research techniques gives your team a complete operational playbook.

Pro Tip: Demand a vendor transparency report covering data sources, update frequency, and known error rates before signing any contract. Reputable vendors provide this. Those who resist are telling you something important.

What most legal teams get wrong about automation

The most common mistake is treating legal AI as a "set-and-forget" solution. Teams deploy a tool, see impressive early results, and then stop monitoring it. Months later, they discover the database has not been updated, the prompts have drifted from best practice, or the tool is being used for tasks it was never designed to handle.

The second mistake is expecting end-to-end automation. Most practitioners overestimate AI's ability to replace legal reasoning and underestimate how task-specific these tools actually are. A system that excels at summarizing employment case law may perform poorly on novel contract disputes. That is not a flaw. It is a design reality.

The teams that actually outperform their peers with AI are the ones that benchmark continuously, validate outputs against known cases, and refine their prompts and workflows iteratively. They treat automation as a process, not a product. They also hold vendors accountable for data quality and update schedules.
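Continuous benchmarking can be as simple as keeping a fixed set of questions whose correct sources your team has already confirmed, rerunning them on a schedule, and comparing the score to the previous run. In this sketch, `ask_tool` is a hypothetical stand-in for however your team queries its research tool (it is not any vendor's API), and the 0.05 regression tolerance is an arbitrary placeholder.

```python
from typing import Callable


def benchmark(ask_tool: Callable[[str], list[str]], gold: dict[str, set[str]]) -> float:
    """Share of benchmark questions for which the tool cited every expected source."""
    if not gold:
        raise ValueError("benchmark set is empty")
    passed = sum(1 for question, expected in gold.items() if expected <= set(ask_tool(question)))
    return passed / len(gold)


def regressed(current: float, baseline: float, tolerance: float = 0.05) -> bool:
    """True when the score has dropped by more than the tolerance since the last run."""
    return baseline - current > tolerance
```

A drop in score after a vendor update or a prompt change is exactly the kind of silent drift the "set-and-forget" mistake lets through.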

The real competitive advantage of automation is not speed alone. It is the ability to redirect expert attorney and compliance officer time toward substantive analysis, strategic advice, and the judgment-heavy work that genuinely requires a trained legal mind. Building an AI legal guidance workflow around that principle is what separates the teams getting lasting value from those stuck in a cycle of hype and disappointment.

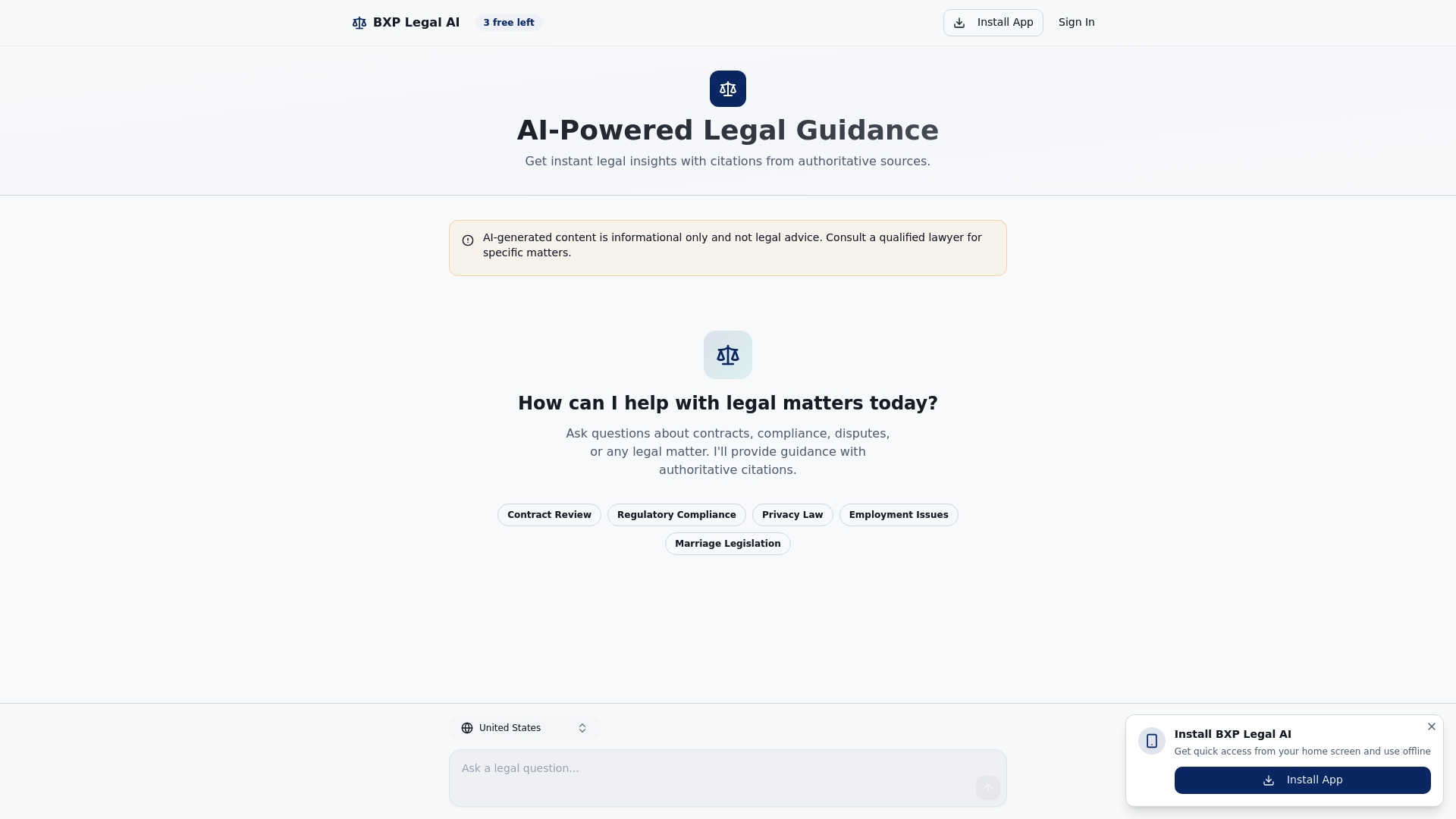

Explore AI-powered legal guidance for your team

If you are ready to move from evaluating legal AI to actually implementing it, the BXP Legal AI platform is built specifically for the compliance and research needs of legal professionals at small and mid-sized organizations. Every response is grounded in authoritative citations, so you get verifiable answers, not confident guesses.

Features like automated document comparison streamline contract and compliance reviews that would otherwise consume hours of attorney time. Whether you are piloting AI for the first time or scaling an existing workflow, BXP Legal gives your team the grounded, transparent support it needs to improve efficiency without sacrificing compliance integrity.

Frequently asked questions

How does legal research automation improve compliance?

Automation tools built on RAG retrieve relevant source documents from trusted databases like Westlaw before generating a response, giving compliance teams verifiable, source-backed answers rather than ungrounded AI output. This makes it far easier to demonstrate due diligence in regulated environments.

Are automated legal research tools error-free?

No. Even leading RAG-based systems have been found to hallucinate on 17 to 34% of queries in testing, and errors increase in ambiguous or novel legal situations. Human verification of every output is not optional; it is essential.

Which tasks benefit most from legal research automation?

Repetitive retrieval, bulk case law summarization, and regulatory scanning are where automation delivers the clearest gains. These tasks free up attorney time for the complex reasoning that AI struggles with and that genuinely requires legal expertise.

How should SMEs evaluate legal research automation solutions?

Run a structured pilot using real firm tasks, not vendor demos. Measure time saved and error rates, review how frequently the vendor updates its data, and assess compliance impact on actual matters before committing to a full rollout.